# The Global Race to Regulate AI: How Nations Are Shaping the Future of Technology

## Introduction

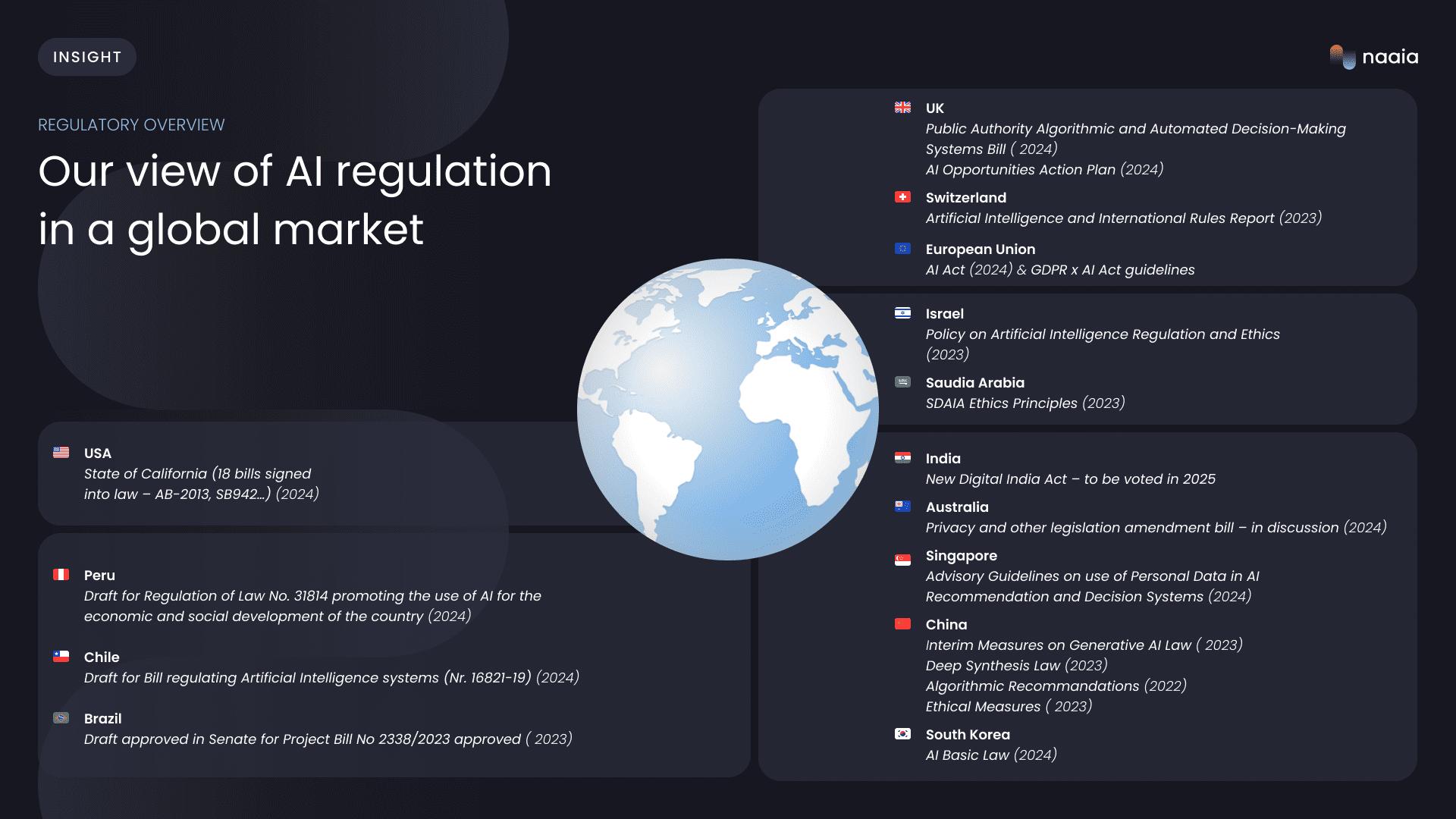

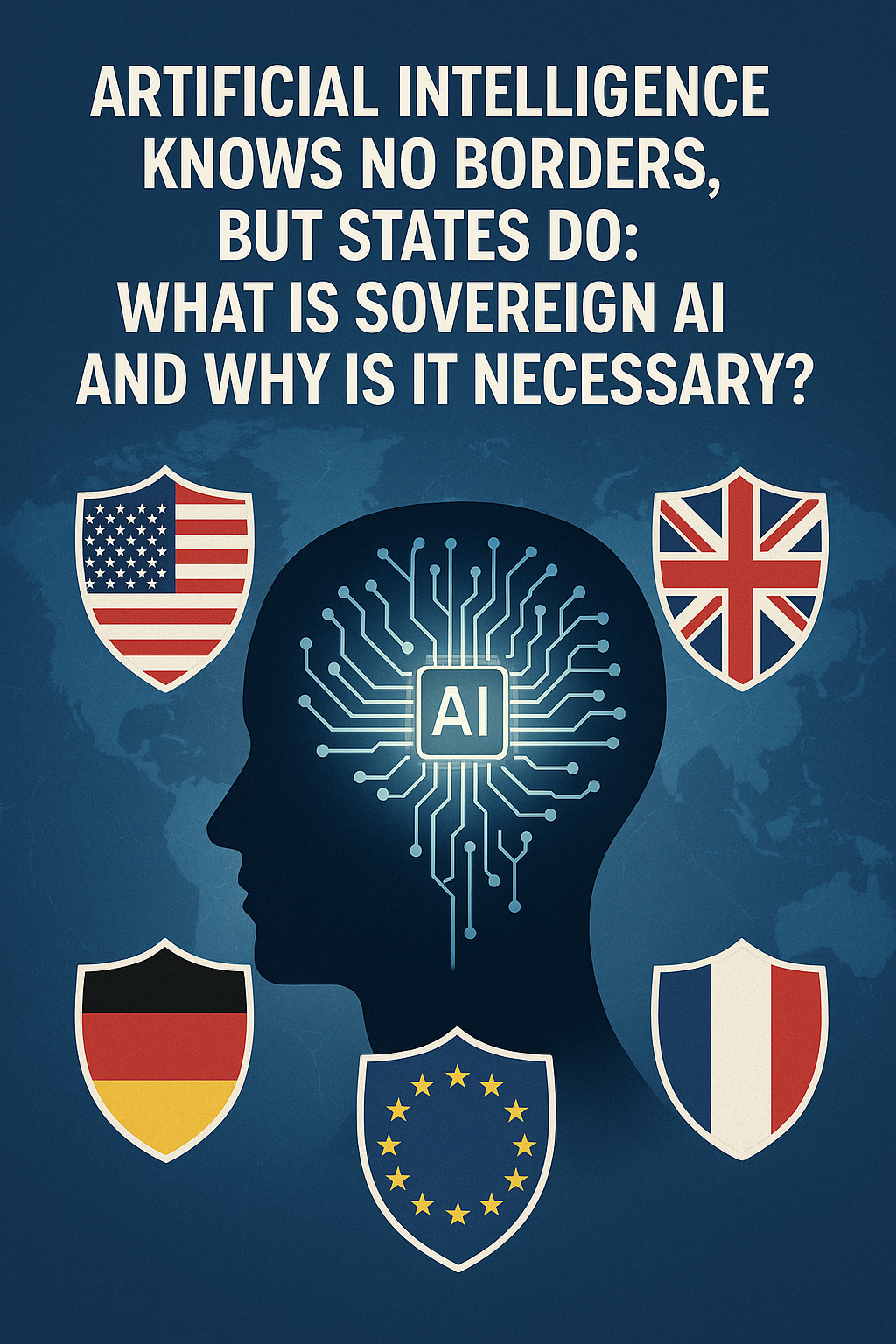

Artificial intelligence is no longer the stuff of science fiction; it is a pervasive, powerful force reshaping economies, societies, and even the very nature of human interaction. From the algorithms that curate our news feeds to the complex systems that guide autonomous vehicles, AI is rapidly becoming the foundational technology of the 21st century. Yet, as its capabilities expand rapidly, a sense of profound unease has grown in parallel. Questions of bias, privacy, accountability, and control have moved from academic seminars to the top of national security agendas. In response, a new global competition has emerged, not for technological supremacy alone but for regulatory dominance. The world's major powers are now engaged in a high-stakes race to write the rules for AI, each vying to encode its own values and strategic interests into the digital architecture of the future. This is more than a policy debate; it is a battle for who will lead the AI century.

## The EU Takes the Lead: Understanding the AI Act

The European Union has firmly positioned itself as the world's digital standard-setter. True to its "Brussels Effect" playbook, whereby EU laws become global norms, it has crafted the most comprehensive and prescriptive AI regulation to date: the AI Act. Politically agreed in December 2023, formally adopted in 2024, and applying in stages, the Act is built on a risk-based pyramid. At the apex are "unacceptable risk" practices, which are prohibited; examples include social scoring by governments and real-time remote biometric identification in public spaces by law enforcement, the latter permitted only under narrow exceptions. The next tier, "high-risk" AI, covers applications in critical sectors like medical devices, judicial systems, and infrastructure management. These systems face stringent requirements, including rigorous testing, human oversight, and data transparency, before they can enter the market. A lower tier addresses "limited risk" AI, like chatbots and deepfakes, mandating that users be informed they are interacting with a machine or synthetic content. Finally, "minimal risk" applications, the vast majority, are left largely unregulated. The AI Act is extraterritorial, meaning any company, anywhere in the world, that wants to offer AI services within the EU's market of roughly 450 million consumers must comply. Brussels is betting that its first-mover advantage and market size will make its framework the de facto global standard.

## The US Response: Balancing Innovation and Regulation

The United States has approached AI governance with a more cautious, sector-specific, and innovation-focused philosophy. Wary of stifling the dynamism of Silicon Valley, Washington has historically favored voluntary frameworks and market-led solutions over sweeping, EU-style legislation. This began to shift with the October 2023 Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence, the most significant US policy statement on the subject. The order directs federal agencies to establish new standards for AI safety and security, protect privacy, and advance equity. It notably requires developers of the most powerful AI models to report their safety test results to the government. However, the US approach remains a complex patchwork. It relies heavily on executive action and agency rulemaking rather than a single, overarching law. The core debate in Washington is how to thread the needle: to erect guardrails against catastrophic risks without ceding US technological leadership to global competitors. The American model is one of continuous adaptation, promoting a pro-innovation environment while reacting to emerging threats, a stark contrast to the EU's preemptive, comprehensive legalism.

## China's AI Strategy: Control and Competition

China's approach to AI regulation is a direct reflection of its broader techno-nationalist ambitions and its system of state capitalism. Beijing's goal is twofold: to become the world's premier AI power by 2030 and to ensure the technology reinforces, rather than challenges, the state's authority. Its regulatory landscape is developing rapidly, characterized by a series of targeted measures aimed at specific AI applications. The most prominent of these are the 2023 "Interim Measures for the Management of Generative Artificial Intelligence Services." These rules require providers of public-facing generative AI services to pass a security assessment and register their algorithms with regulators, ensure content aligns with "core socialist values," and prevent the generation of subversive or false information. Lawfully sourced training data and labeling of AI-generated content are also mandatory. Unlike the EU's rights-based regulation or the US's innovation-first stance, China's framework is fundamentally about information control and social stability. The state is both a key investor and the ultimate arbiter, using regulation as a tool to steer its national tech champions while maintaining a tight grip on the digital sphere.

## The Regulatory Divergence: Implications for Global Tech

The trifurcation of AI governance into the EU's rights-based legalism, the US's market-driven pragmatism, and China's state-centric control creates a complex and fragmented global landscape for technology companies. A developer in London, a startup in Austin, or a lab in Shenzhen that wants to serve customers across these markets must now navigate a daunting maze of compliance obligations. This regulatory divergence could lead to a "splinternet," where digital services are balkanized and AI models must be retrained or reconfigured for different legal regimes. For global tech giants, the cost of compliance will be substantial. For smaller innovators, the barriers to entry could become insurmountable, potentially stifling competition. This fragmentation also complicates international collaboration on AI safety research and the establishment of shared norms for responsible deployment, making it harder to address global challenges like AI-driven disinformation or the risk of autonomous weapons.

## AI Governance as Geopolitical Strategy

The race to regulate AI is not just about managing risk; it is a central arena for 21st-century geopolitics. The choice of a regulatory model is a declaration of values. The EU champions a human-centric vision, placing individual rights and democracy at the core of its framework. The US model implicitly prioritizes economic dynamism and technological leadership as the bedrock of its global influence. China's approach subordinates all other concerns to state security and national sovereignty. By exporting their respective regulatory models, these powers aim to shape the global technology ecosystem in their own image. A country that models its own law on the EU's AI Act is also aligning itself with a specific bloc of values and a particular economic sphere. Similarly, nations that embrace China's model of techno-authoritarianism are making a clear geopolitical choice. Control over the standards that govern AI is a new, potent form of soft power.

## The Stakes: Who Will Lead the AI Century?

The long-term consequences of this regulatory race are profound. The framework that becomes the global benchmark will not only determine the future of the technology industry but also influence the global balance of power. If the EU's comprehensive model prevails, it could usher in an era of more responsible, rights-respecting AI, but potentially at the cost of slower innovation. If the US's more laissez-faire approach dominates, it might accelerate technological breakthroughs but risk greater social disruption and unforeseen crises. If China's state-controlled model spreads, it could normalize digital authoritarianism and fragment the global internet. The ultimate question is which nation or bloc will successfully project its vision for the future of technology onto the rest of the world. The answer will determine not just which companies thrive, but which values—openness or control, democracy or authoritarianism, individual rights or state power—define the coming AI century.

## Conclusion

The global race to regulate AI has just begun, but the contours of the competition are clear. The EU, US, and China are not merely writing laws; they are embedding their core principles into the code that will run our future. The outcome of this contest will have lasting implications for innovation, geopolitics, and the very fabric of society. As nations navigate this uncharted territory, the challenge will be to find a path that both fosters technological progress and safeguards our most fundamental human values.