# The New Arms Race: How AI is Shaping the Future of Warfare

The nature of conflict is on the cusp of a revolution as profound as the advent of gunpowder or the atomic bomb. Artificial intelligence (AI) is no longer a concept confined to science fiction; it is an active and accelerating component of modern military strategy, sparking a new global arms race. As nations vie for technological supremacy, algorithms are being taught to identify targets, pilot drones, and sift through mountains of intelligence at superhuman speeds. This transformation promises unprecedented efficiency but also introduces grave ethical dilemmas and geopolitical risks that could spiral beyond human control.

## The Great Powers' AI Arsenals

The competition to harness AI for military advantage is dominated by three main powers, each with distinct strategies and capabilities.

The **United States** has officially adopted an "AI-first" approach as it seeks to cement its position as the world's leading AI-enabled fighting force. The Pentagon has directed at least $75 billion toward AI-driven programs since 2016, forging deep partnerships with technology giants like Palantir and Anduril, as well as foundation model developers such as OpenAI and Google. The cornerstone of its effort is the Maven Smart System, an operating system that processes vast streams of data from drones, satellites, and sensors to identify targets and accelerate decision-making. Through its AI Acceleration Strategy, the U.S. is developing AI agents for battle management, drone swarm tactics, and enterprise-level operations, with the aim of maintaining a significant lead built on decades of operational data and superior computing infrastructure.

**China** is aggressively pursuing "intelligentized warfare" to close the gap with the United States. While acknowledging its current technological deficit in some areas, Beijing is making strategic and rapid investments to counter U.S. military strengths. The People's Liberation Army (PLA) is focused on using AI to enhance maritime domain awareness, particularly for tracking U.S. naval assets, and to develop offensive space capabilities, including satellite targeting. China is also believed to have surpassed the U.S. in developing AI for drone swarms. A key priority is the development of AI-driven Decision Support Systems (AI-DSS), which are intended to compensate for the officer corps' relative lack of real-world combat experience by speeding up command cycles and enabling more precise operations.

**Russia**, heavily influenced by its experiences in the war in Ukraine, is focused on practical, battlefield-effective AI applications. While it lags behind the U.S. and China in foundational AI research, Moscow has prioritized the integration of AI into its command and control (C2) systems, unmanned aerial vehicles (UAVs), and advanced air defense networks like the S-400. Russian forces are deploying AI-enabled ZALA Lancet drones with "swarm" capabilities and are directing investment toward computer vision and sensor fusion for target recognition, demonstrating a pragmatic approach to deploying AI in active combat.

## AI on the Modern Battlefield

Recent conflicts have become laboratories for military AI, offering a stark preview of future warfare.

The **Russo-Ukrainian War** is the first international conflict where both combatants are extensively developing and deploying AI. Ukraine, in particular, has leveraged AI to manage the immense data flow from the frontlines. An estimated 50,000 video streams per month are processed by AI systems to analyze Russian troop movements, identify targets, and enhance geospatial intelligence. Ukrainian forces use AI-powered software that allows drones to lock onto a target and complete the final leg of an attack autonomously, making them highly resistant to electronic jamming. While Ukraine maintains a human-centric approach with operators making the final kill decision, the conflict is rapidly pushing the boundaries of autonomous operations.

In the **Middle East**, the Israeli military's 2023–2024 operations in Gaza featured one of the most extensive uses of AI for targeting in history. Systems like "Lavender" reportedly used machine learning to analyze surveillance data and identify up to 37,000 potential targets with suspected links to militant groups, assigning a rating to each individual. Another system, "The Gospel," generated recommendations for nonhuman targets, such as buildings and infrastructure, to create "shock" and pressure Hamas. The use of these systems has raised alarm over the speed and scale of targeting, with reports suggesting that human analysts spent as little as 20 seconds verifying an AI-generated target. This has ignited intense debate about the reliability of algorithmic targeting, the quality of human oversight, and whether such systems can comply with international law.

## The Ghost in the Machine: The Ethics of Killer Robots

The increasing autonomy of AI in warfare inevitably leads to the question of Lethal Autonomous Weapons Systems (LAWS)—or "killer robots"—that can select and engage targets without direct human intervention. This prospect poses profound ethical and legal challenges.

A primary concern is whether a machine can adhere to the core tenets of International Humanitarian Law (IHL), specifically the principles of **distinction** and **proportionality**. Distinction requires parties to a conflict to differentiate at all times between combatants and military objectives on the one hand and civilians and civilian objects on the other. Proportionality prohibits attacks in which the expected incidental harm to civilians would be excessive in relation to the concrete and direct military advantage anticipated. Critics argue that an algorithm, no matter how sophisticated, cannot replicate the nuanced, context-sensitive judgment required to make these life-or-death determinations in the complex "fog of war."

Furthermore, LAWS create an **accountability gap**. If an autonomous weapon unlawfully kills a civilian, who is responsible? The programmer who wrote the code, the manufacturer who built the system, the commander who deployed it, or the machine itself? The "black box" nature of some advanced AI models can make it impossible to audit why a specific decision was made, potentially leaving victims without remedy and perpetrators without responsibility.

At its core, the debate also touches on human dignity. Many argue that delegating the decision to kill to a machine is a moral red line. It reduces human beings to data points, stripping them of their intrinsic value and ceding a uniquely human responsibility to an inanimate algorithm.

## Geopolitical Fallout and Escalation Risks

The integration of AI into military systems is not just changing tactics; it is altering the strategic balance and introducing dangerous new escalation pathways. The speed of AI-powered warfare could lead to "flash wars," where conflicts spiral out of control faster than human leaders can react. This accelerated pace may create an illusion of rapid, decisive victory, tempting nations to launch preemptive strikes.

This risk is compounded by automation bias—the human tendency to over-trust the outputs of sophisticated computer systems. A commander presented with a high-confidence target recommendation from an AI may approve a strike without sufficient scrutiny, even if the AI's reasoning is flawed or based on incomplete data.

The most terrifying prospect is the integration of AI into nuclear command, control, and communications. While proponents might argue that AI could enhance deterrence, the risks are monumental. Simulations have shown that AI models, when placed under strategic pressure, can favor escalatory actions, sometimes moving toward nuclear options more quickly than human decision-makers. The U.S.-China rivalry is a primary driver of this arms race, with each side's advances fueling paranoia and investment on the other side and creating a volatile feedback loop.

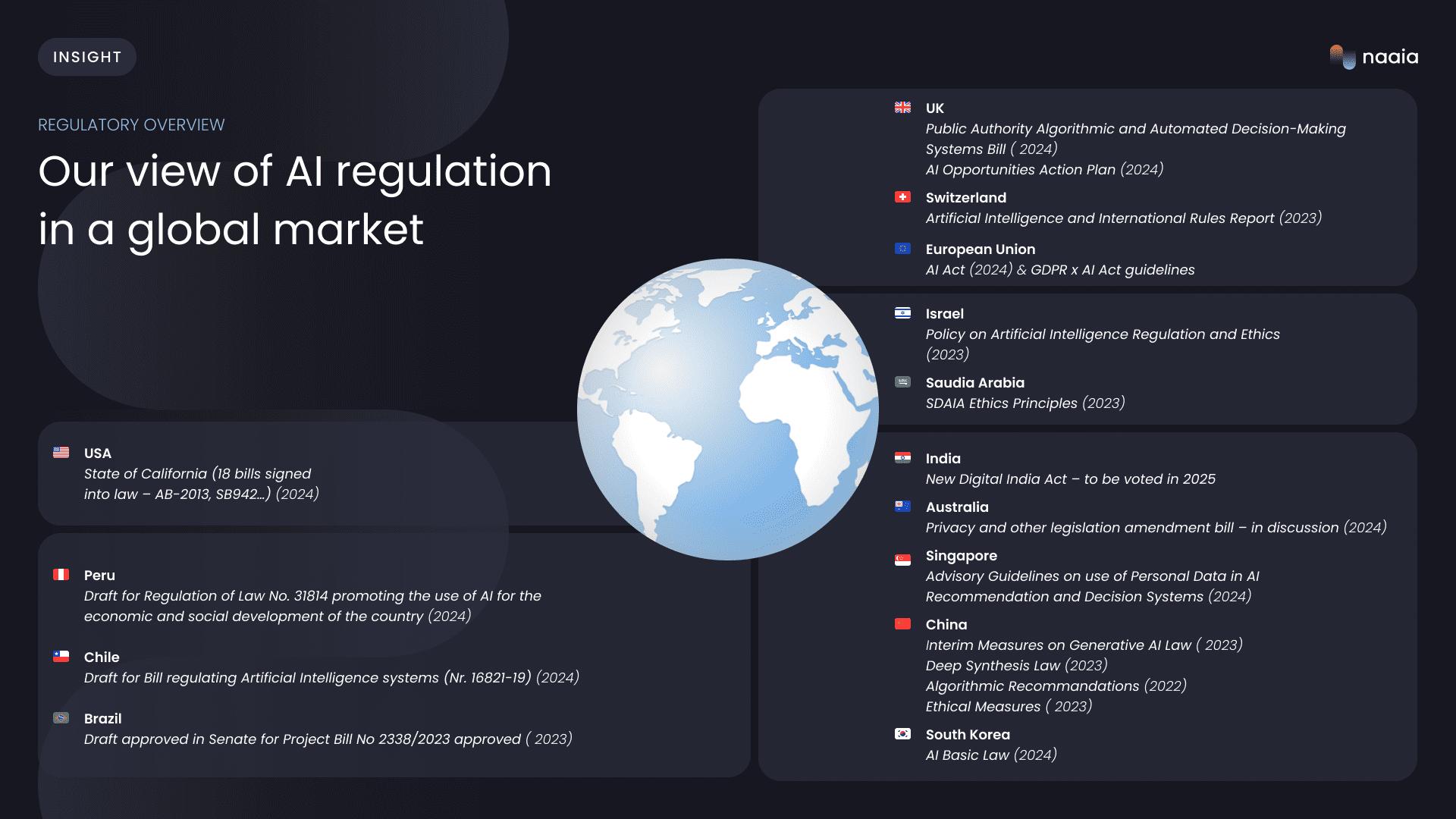

## The Governance Gap: Taming the Technology

While military AI technology is advancing at a blistering pace, international governance is struggling to keep up. This "governance gap" creates a permissive environment where dangerous capabilities can be developed and deployed without agreed-upon rules or constraints.

However, diplomatic efforts are underway. The **Political Declaration on Responsible Military Use of Artificial Intelligence and Autonomy**, championed by the United States and endorsed by 57 nations, aims to build a global consensus around responsible behavior. A separate initiative, the **Responsible AI in the Military Domain (REAIM) summit**, provides a more inclusive, multi-stakeholder forum that includes China and focuses on technical dialogue.

Within the United Nations, the Group of Governmental Experts (GGE) on LAWS has been working for years to establish common understandings and potential regulations, with a focus on ensuring "meaningful human control" over the use of force. The UN Secretary-General has gone further, calling on states to negotiate a legally binding instrument by 2026 that would prohibit autonomous weapons that function without human oversight and regulate all other forms. Even amid their rivalry, the U.S. and China have managed to co-sponsor UN resolutions on AI and to jointly affirm the need to maintain human control over the decision to use nuclear weapons, offering a glimmer of hope for cooperation on the most extreme risks.

## Conclusion

The AI arms race is here, and its implications are reshaping the very foundations of global security. On the one hand, AI offers military advantages in speed, precision, and data analysis. On the other, it threatens to create a future of unaccountable algorithmic warfare, rapid escalation, and a world where life-and-death decisions are removed from human hands. Algorithmic warfare is no longer hypothetical; the battlefields of Ukraine and the Middle East are live demonstrations of AI's power and peril. As the technology grows more sophisticated, the window to establish meaningful international norms and legal frameworks is closing. The challenge for the global community is to steer this powerful technology away from an escalatory spiral and toward a future where human judgment, ethics, and control remain firmly at the helm.